The Ultimate AI Cloud

Build and scale AI faster on a cloud engineered from silicon to API.

From training to inference. On your terms

Faster time to AI value

From zero to clusters in minutes, with built-in repeatability and self-service access.

Raw power. No surprises

Custom hardware with non-virtualized GPUs & InfiniBand — with industry-leading MTBF/MTTR.

Any user. Any workload

Built from the ground up for AI developers, with built-in MLOps tooling, serverless and managed inference.

Elastic at any stage

From small experiments to global-scale environments with flexible consumption options.

Trusted by the entire AI ecosystem

Built by builders

Hear from Nebius customers

Revolut

Revolut’s Production AI Playbook: Agents, New Processes, and Nebius Token Factory

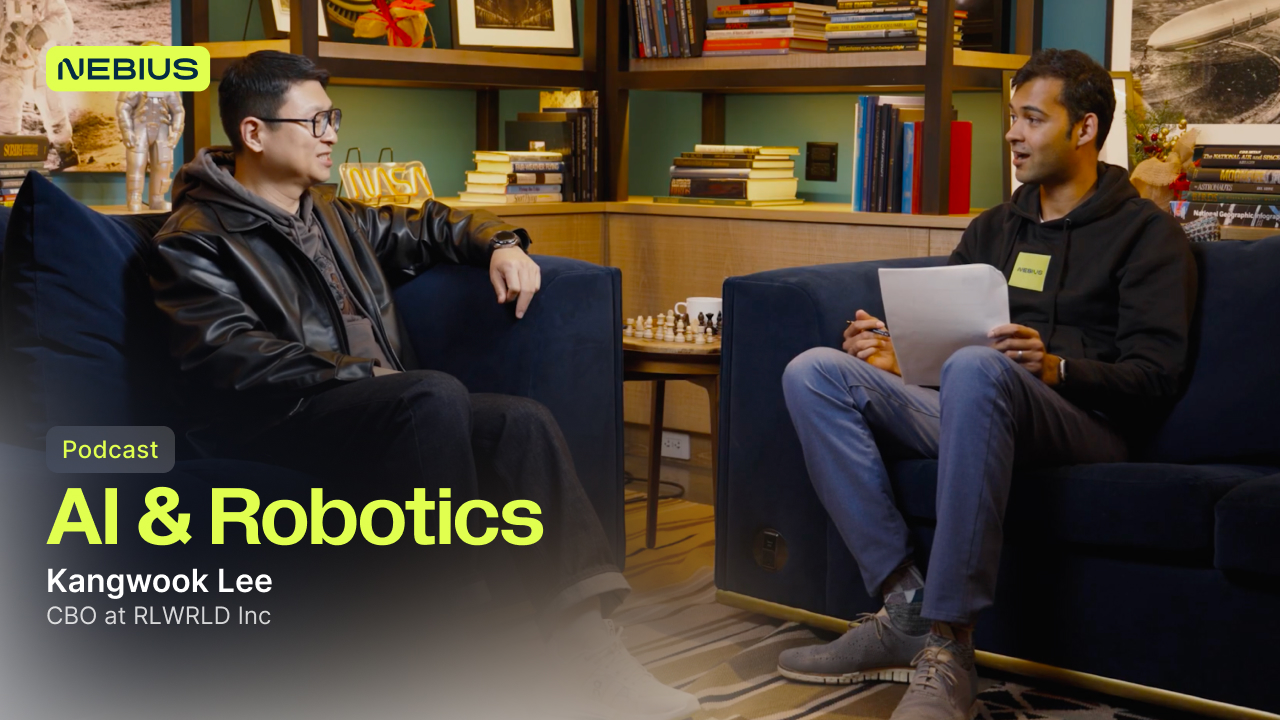

RoboForce

Building Robo-Labor: How RoboForce is turning physical AI into work humans shouldn’t have to do

Shopify

Inside Shopify’s AI Foundation Model Training on Nebius

Scaling AI wellbeing support

“Designing Dawn required access to specialized infrastructure, health-specific governance, genuine clinical expertise, and a lot of specialized data. It requires clinical-grade reasoning from a highly capable model, but also system stability, reliability and consistency across every single interaction. We want every conversation to build long-term trust.”

model parameters powering Dawn in production

P99 latency window for safety-sensitive conversations

AI-guided sessions delivered

by Sword Health in 2025

Building Robo-Labor for the physical AI era

To build deployable Robo-Labor for dirty, dangerous and repetitive industrial work, RoboForce runs its Physical AI workflow on Nebius AI Cloud with NVIDIA Blackwell infrastructure, scalable storage and expert engineering support. Nebius helps RoboForce connect robotic data, simulation, training, evaluation and deployment into a faster learning loop — reducing AI setup pipeline time by 70% and cutting iteration cycles from months to days.

reduction in AI setup pipeline time with Nebius

reduction in model iteration cycles

manipulation precision for TITAN

in demanding industrial environments

Training a gen AI foundation model

“Nebius stands out when compared to other clouds, especially if you’re looking for flexibility and quick support. On the scalability front, they always have GPUs available, so no frustrating delays waiting for quotas. The storage speed on Nebius is also higher in comparison to some other clouds. Nebius offers more control, better support and reliable scalability compared to some other clouds.”

model parameters

to DALL·E 3 with 49% preference

on PartiPrompts benchmark

preference over Midjourney v6

on the same benchmark

Running large-scale training with Slurm

“Nebius provided the reliable infrastructure we needed to scale training seamlessly, saving significant engineering time. The team’s deep expertise and SLURM clusters helped us overcome early challenges and focus on building better models faster.”

training runs executed without interruption

precision for model compilation, optimizing speed and efficiency

of data storage usage

Scaling AI inference for safer banking

To support growth from 30 million to more than 70 million users, Revolut rebuilt core workflows around AI on Nebius AI Cloud. Running on 200+ NVIDIA H100 GPUs and Nebius Token Factory, Revolut powers FinCrime agents, chat orchestration and PRAGMA, its event-based transaction model. Nebius helps Revolut scale reliable, observable inference and training across high-stakes workloads — preventing millions of fraudulent transactions and handling up to 1.2 million support tickets each month.

chats completed without human intervention

chat tickets per month on Nebius Token Factory

of fraudulent transactions prevented

Reliable performance at scale

Reference Platform NVIDIA Cloud Partner

Nebius has moved from NVIDIA Partner Network preferred status to Reference Platform NVIDIA Cloud Partner (NCP), strengthening its position as a trusted leader in cloud innovation. The Reference Platform NCP designation is reserved for select partners who operate large clusters built in coordination with NVIDIA and adhere to a tested and optimized reference architecture.